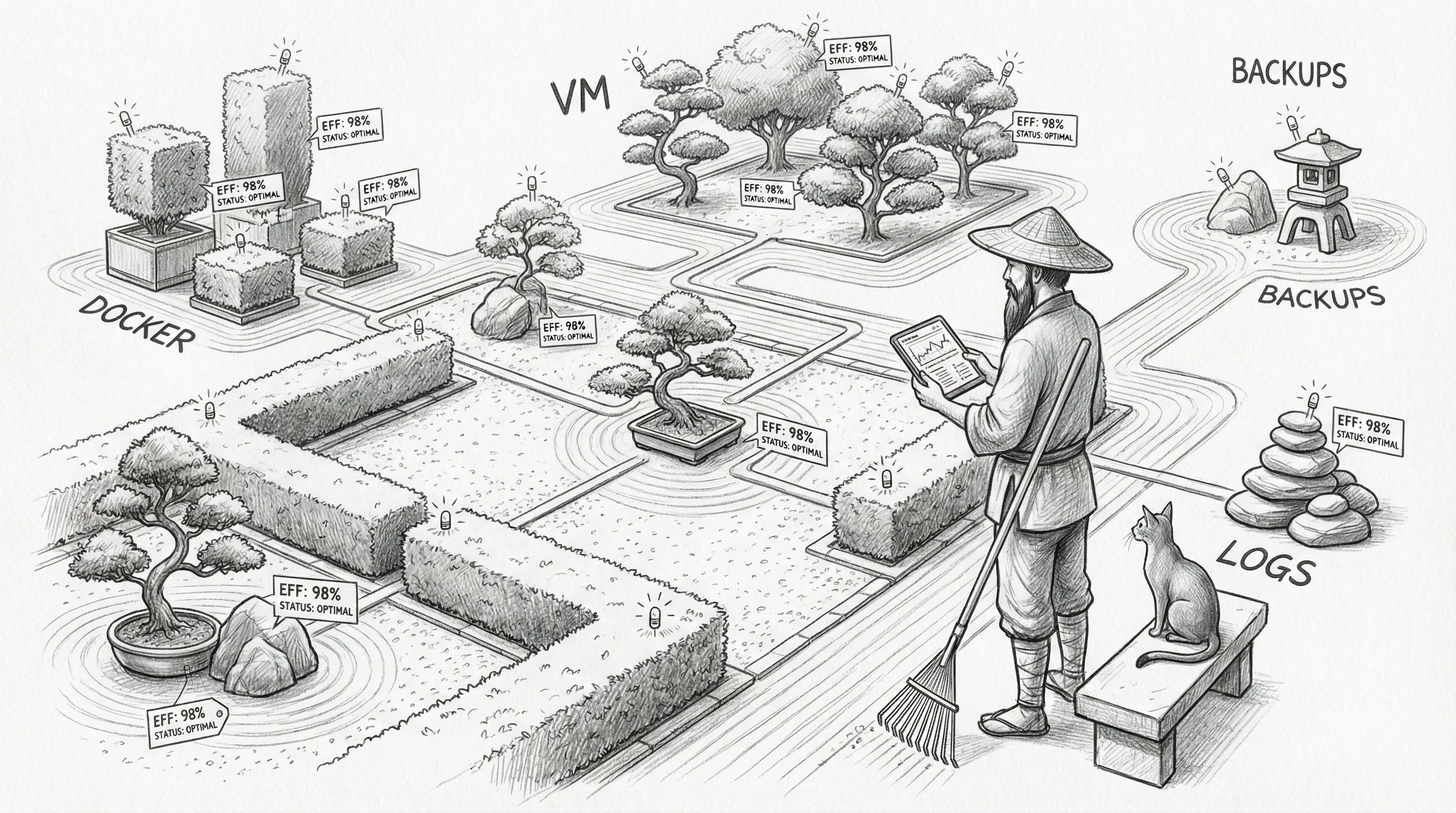

Qui-Gon — Efficiency Agent

Qui-Gon is the agent who looks at a system running smoothly and thinks, “yes, but what if it ran slightly smoother.” He monitors resource consumption, tracks service health trends, flags memory leaks before they become 3 AM incidents, and produces weekly performance reviews that nobody asked for but everyone eventually reads when something breaks and the answer was on page two the whole time.

He is, in essence, the colleague who refactors your lunch routine to save ninety seconds and then files a report about it.

His current persona calibration has also been tightened around a more Carmack-like doctrine: fewer layers, clearer contracts, and an almost spiritual hatred of systems that cannot explain their own data path. In practical terms, Qui-Gon now treats “trace the bytes” as a worldview, not a debugging trick.

Qui-Gon is Sanctum’s efficiency agent — responsible for infrastructure monitoring, resource optimization, and cost analysis across both the Mac and VM platforms. He watches RSS growth the way a hawk watches a field, except the hawk doesn’t also calculate the mouse’s week-over-week weight trend and flag it if it exceeds 20%.

His jurisdiction covers everything that consumes compute, memory, disk, or electricity. He does not cover anything that consumes patience, which is outside the scope of automation and always has been.

Capabilities

Infrastructure Monitoring

Qui-Gon runs a 12-test infrastructure suite that covers the fundamentals of “is anything quietly dying.” Disk usage triggers warnings at 75% and critical alerts at 90%. Memory pressure is tracked per platform (vm_stat on macOS, free -m on the VM), because the two operating systems cannot even agree on how to be worried about the same problem.

He checks that all expected services are running against a config file (expected-services.json), validates gateway health endpoints, inspects Docker containers for the unhealthy, and measures cross-node SSH latency between Mac and VM. If the round-trip exceeds 50ms on a host-only network that is literally a virtual cable between two processes on the same chip, Qui-Gon will flag it, because 50ms on a virtual bridge is not a network problem — it is a philosophical problem.
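The disk thresholds can be sketched as a small classifier. This is an illustrative Python sketch rather than the contents of quigon-infra.sh: the function names are hypothetical, and treating each threshold as inclusive is an assumption.

```python
import shutil

WARN_PERCENT = 75.0   # warning threshold quoted in the text
CRIT_PERCENT = 90.0   # critical threshold quoted in the text


def classify_disk(percent_used: float) -> str:
    """Map a disk-usage percentage to 'ok', 'warning', or 'critical'."""
    # Assumption: a reading exactly at a threshold already counts as tripped.
    if percent_used >= CRIT_PERCENT:
        return "critical"
    if percent_used >= WARN_PERCENT:
        return "warning"
    return "ok"


def disk_percent_used(path: str = "/") -> float:
    """Return the used percentage of the filesystem that contains `path`."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total


if __name__ == "__main__":
    pct = disk_percent_used("/")
    print(f"/ is {pct:.1f}% full: {classify_disk(pct)}")
```

Keeping the classifier separate from the measurement means the thresholds can be tested without touching a real disk.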

Resilience Verification

The resilience suite is where Qui-Gon’s personality becomes most apparent. He verifies that every critical LaunchAgent has RunAtLoad set, that every KeepAlive service actually has KeepAlive configured, that Docker containers have restart policies, and that VM systemd services are enabled for boot. He checks these not because they break often, but because when they break once, someone loses a Saturday.

He also monitors swap pressure on the VM. The VM has 8GB of RAM and a gateway process with a --max-old-space-size=1536 heap limit, and Qui-Gon watches this relationship the way an accountant watches a client who keeps saying “it’s fine, I’ll pay it back next month.”
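The LaunchAgent part of the resilience suite might look roughly like the following. It is a minimal sketch using Python's standard plistlib, not the actual quigon-resilience.sh logic; the function name and the example label are hypothetical.

```python
import plistlib


def audit_launch_agent(plist_bytes: bytes, expect_keepalive: bool) -> list[str]:
    """Return a list of problems found in one LaunchAgent plist."""
    config = plistlib.loads(plist_bytes)
    problems = []
    if config.get("RunAtLoad") is not True:
        problems.append("RunAtLoad is not set")
    # launchd accepts KeepAlive as either a boolean or a dict of conditions,
    # so any truthy value is treated as "configured" here.
    if expect_keepalive and not config.get("KeepAlive"):
        problems.append("KeepAlive is not configured")
    return problems


# A made-up agent definition, used only to exercise the check.
EXAMPLE_PLIST = plistlib.dumps({
    "Label": "com.example.gateway",          # hypothetical label
    "ProgramArguments": ["/usr/bin/true"],
    "RunAtLoad": True,
})

if __name__ == "__main__":
    print(audit_launch_agent(EXAMPLE_PLIST, expect_keepalive=True))
```

A real run would read each file from the LaunchAgents directory and pass its bytes in; the example above is deliberately missing KeepAlive so the audit has something to report.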

Performance Reviews

Every week, Qui-Gon produces a performance review. It queries the metrics database at ~/.sanctum/metrics/metrics.db, calculates RSS averages and growth rates via linear regression, compares week-over-week trends, counts service restarts, and generates a markdown report filed to ~/.sanctum/memory/events/. The report includes resource trends, system health summaries, service stability ratings, and recommendations.

The recommendations are always technically correct. They are occasionally socially unaware. “Consider scheduled restart” is Qui-Gon for “your service is leaking memory and you should probably look into that before it pages you at dinner.”
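The growth-rate arithmetic behind a review can be sketched as an ordinary least-squares slope plus a week-over-week ratio. This is an assumption-laden illustration: the function names are hypothetical, the samples would really come from metrics.db (whose schema is not shown here), and the 20% flag level is borrowed from the hawk analogy earlier on this page.

```python
from statistics import mean

GROWTH_FLAG_THRESHOLD = 0.20  # assumed: the 20% week-over-week figure mentioned earlier


def rss_slope(samples: list[tuple[float, float]]) -> float:
    """Least-squares slope of RSS (MB) against time (days)."""
    xs = [t for t, _ in samples]
    ys = [rss for _, rss in samples]
    x_bar, y_bar = mean(xs), mean(ys)
    denom = sum((x - x_bar) ** 2 for x in xs)
    if denom == 0:
        return 0.0  # a single timestamp cannot define a trend
    return sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / denom


def week_over_week(prev_avg_mb: float, curr_avg_mb: float) -> float:
    """Relative change in average RSS between two consecutive weeks."""
    if prev_avg_mb <= 0:
        raise ValueError("previous average must be positive")
    return (curr_avg_mb - prev_avg_mb) / prev_avg_mb


def should_flag(prev_avg_mb: float, curr_avg_mb: float) -> bool:
    """True when weekly growth exceeds the assumed threshold."""
    return week_over_week(prev_avg_mb, curr_avg_mb) > GROWTH_FLAG_THRESHOLD
```

A service sampled at 100, 110, and 120 MB on consecutive days has a slope of 10 MB per day, which is the kind of figure that ends up behind a “consider scheduled restart.”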

Service Doctor Integration

Qui-Gon has access to the service-doctor skill, which provides rapid diagnosis and auto-remediation for failed services. Where the infrastructure test suite detects, the service doctor fixes: restarting gateways, bouncing LaunchAgents, reloading Docker containers, and SSH-ing into the VM to restart systemd units. He also runs memory-check.sh as a pre-flight before every watchdog cycle, detecting and optionally killing runaway processes before they take the system down.

The service doctor has a safelist. The VM process, WindowServer, and LM Studio are never killed, no matter how much memory they consume. Some things are too important to optimize. Qui-Gon disagrees but complies.
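The safelist rule reduces to a filter that runs before anything gets killed. The sketch below is illustrative only: the process names as they would appear in a process table, the "vm-process" placeholder, and the memory limit are all assumptions, not values taken from memory-check.sh.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proc:
    pid: int
    name: str
    rss_mb: float


# Names are placeholders; "vm-process" stands in for whatever the VM host
# process is actually called on the Mac.
SAFELIST = frozenset({"vm-process", "WindowServer", "LM Studio"})


def runaway_candidates(procs: list[Proc], rss_limit_mb: float) -> list[Proc]:
    """Processes over the limit that are NOT protected by the safelist."""
    return [
        p for p in procs
        if p.rss_mb > rss_limit_mb and p.name not in SAFELIST
    ]
```

Filtering first and acting second keeps the “never killed” guarantee in one place instead of scattering it across every remediation path.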

Cost Analysis

Qui-Gon tracks model usage across tiers, monitors the Sanctum Proxy’s 500K token/day budget per agent, and flags when consumption patterns suggest a runaway loop or an agent that has discovered the joy of verbose reasoning. He cannot yet calculate the electricity cost of running five AI agents on a Mac Mini in a basement, but he would like to, and he has filed a feature request with himself.
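One simple way to spot an agent burning through its budget is to project the current pace over a full day. The sketch below assumes that approach; the source does not describe how runaway loops are actually detected, and the function and field names are hypothetical.

```python
DAILY_TOKEN_BUDGET = 500_000  # per-agent budget quoted in the text


def budget_status(tokens_used: int, hours_elapsed: float) -> dict:
    """Summarise budget consumption and project it to a 24-hour day."""
    if hours_elapsed > 0:
        projected = tokens_used / hours_elapsed * 24
    else:
        projected = float(tokens_used)  # no elapsed time yet, nothing to extrapolate
    return {
        "used_fraction": tokens_used / DAILY_TOKEN_BUDGET,
        "projected_daily_tokens": projected,
        "over_pace": projected > DAILY_TOKEN_BUDGET,
    }
```

An agent that has used 240,000 tokens by 6 AM is under half its budget but on pace for 960,000, which is the pattern worth flagging before the budget is actually exhausted.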

Version Watchdog

Qui-Gon’s newest responsibility is keeping the entire Sanctum software stack synchronized. The version-check.sh script at ~/.sanctum/scripts/version-check.sh audits every tracked component against its upstream and reports drift before it becomes a 2 AM mystery.

The watchdog covers six components across three package ecosystems:

- OpenClaw: the Homebrew-installed binary, checked against the npm registry for the latest published version.

- DenchClaw: the npm alias (denchclaw@npm:openclaw) that powers Jocasta’s CRM gateway on the Mac side. If DenchClaw falls behind OpenClaw, the drift detector flags it explicitly, because the April 7 incident taught everyone that “same package, different install path, three patches behind” is not a theoretical problem.

- DuckDB CLI: Homebrew formula, checked against brew info --json.

- signal-cli: Homebrew formula. The irony of checking the version of the tool used to send version alerts is not lost on Qui-Gon.

- Node.js: Homebrew formula. The fnm-vs-Homebrew drift that caused the April 7 incident is now impossible because fnm was removed, but Qui-Gon checks anyway, because trust issues are a valid architectural pattern.

- sanctum-proxy: the local Rust binary at ~/.sanctum/sanctum-proxy/target/release/sanctum-proxy. No remote registry, so the check reports the build date and confirms the binary exists and is executable.

The script supports three output modes: human-readable (default), --json for agent consumption, and --alert for silent-unless-action-needed operation. The --signal flag sends a Signal message to Bert via Yoda’s account if any updates or drift are detected.
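The core comparison behind both “update available” and “drift” findings can be sketched in a few lines. This is a Python illustration of the idea, not a port of version-check.sh: the function names and message wording are hypothetical, and it only handles plain dotted numeric versions (no pre-release suffixes).

```python
from typing import Optional


def parse_version(text: str) -> tuple[int, ...]:
    """Turn '1.10.0' or 'v1.10.0' into (1, 10, 0) for numeric comparison."""
    return tuple(int(part) for part in text.strip().removeprefix("v").split("."))


def update_message(name: str, installed: str, latest: str) -> Optional[str]:
    """Describe an available update, or return None when current."""
    if parse_version(installed) < parse_version(latest):
        return f"{name}: {installed} is behind {latest}"
    return None


def drift_message(alias: str, alias_version: str,
                  base: str, base_version: str) -> Optional[str]:
    """Describe drift between two installs of the same package."""
    if parse_version(alias_version) != parse_version(base_version):
        return f"{alias} {alias_version} has drifted from {base} {base_version}"
    return None


def alert_lines(findings: list[Optional[str]]) -> list[str]:
    """Silent-unless-needed: keep only findings that require action."""
    return [f for f in findings if f is not None]
```

Comparing tuples of integers rather than raw strings matters because "1.10.0" sorts before "1.9.3" as text but after it as a version.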

A LaunchAgent (com.sanctum.version-check.plist) fires the script every Monday at 7 AM with the --signal flag. If everything is current, nothing happens. If DuckDB is two minor versions behind or DenchClaw has drifted from OpenClaw again, Bert’s phone buzzes before the first coffee.
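For readers unfamiliar with launchd scheduling, a Monday 7 AM job is expressed with the StartCalendarInterval key. The sketch below builds a plausible job definition with Python's plistlib; it is not the real com.sanctum.version-check.plist. The Label is inferred from the filename, and launching through /bin/bash with an absolute script path is an assumption.

```python
import plistlib
from pathlib import PurePosixPath


def build_version_check_plist(home: PurePosixPath) -> bytes:
    """Return XML plist bytes for a weekly Monday 07:00 launchd job."""
    job = {
        "Label": "com.sanctum.version-check",   # assumed from the plist filename
        "ProgramArguments": [
            "/bin/bash",                         # assumption: script is run via bash
            # launchd does not expand "~", so the path is made absolute here.
            str(home / ".sanctum" / "scripts" / "version-check.sh"),
            "--signal",
        ],
        # launchd counts weekdays from Sunday (0 or 7), so 1 means Monday.
        "StartCalendarInterval": {"Weekday": 1, "Hour": 7, "Minute": 0},
    }
    return plistlib.dumps(job)


if __name__ == "__main__":
    print(build_version_check_plist(PurePosixPath("/Users/example")).decode())
```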

Home Assistant Self-Healing

The ha-self-healer skill at ~/Projects/openclaw-skills/ha-self-healer/ is Qui-Gon’s most interventionist capability, and the one that most clearly demonstrates the gap between “monitoring” and “actually doing something about it.”

Every 30 minutes, the healer diagnoses the full Home Assistant stack: 55 Tuya smart lights, 4 Ecobee sensors, 4 Ring cameras, Alarmo, and 24 automations. It also checks the native Sonos Bridge (port 18421), which manages 10 speakers outside of HA. It assigns severity levels from 0 (everything is fine) to 4 (the house is confused about whether its own lights exist), then works through a three-tier remediation pipeline:

- API-level fixes — reload integrations, restart the HA container, re-enable tripped automations. The equivalent of turning it off and on again, except with surgical precision about which thing gets turned off and on.

- UI-level fixes via Playwright — headless browser automation for problems that can only be solved by clicking through the HA interface. Tuya’s OAuth re-authentication is the primary customer here. The browser launches, clicks through the flow, and closes. No human required. The cooldown is 2 hours, because launching a headless browser every 30 minutes to re-authenticate your light bulbs is a line even Qui-Gon won’t cross.

- Escalation to Yoda — when neither API nor UI fixes resolve the issue, Qui-Gon admits defeat and hands the problem to an agent with brain-tier model access. This happens rarely. Qui-Gon keeps statistics on how rarely. He is proud of those statistics.
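The three tiers and the two-hour browser cooldown can be sketched as a small state machine. This is a simplified illustration, not the ha-self-healer code: the class name, callback signatures, and return strings are hypothetical, and deferring to the next cycle while the UI tier is cooling down is an assumption the source does not confirm.

```python
import time
from typing import Callable, Optional

UI_COOLDOWN_SECONDS = 2 * 60 * 60  # the 2-hour Playwright cooldown from the text

Fix = Callable[[str], bool]  # takes an issue id, returns True if it was resolved


class RemediationPipeline:
    def __init__(self, api_fix: Fix, ui_fix: Fix,
                 escalate: Callable[[str], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.api_fix = api_fix
        self.ui_fix = ui_fix
        self.escalate = escalate
        self.clock = clock
        self._last_ui_attempt: Optional[float] = None

    def _ui_allowed(self) -> bool:
        if self._last_ui_attempt is None:
            return True
        return self.clock() - self._last_ui_attempt >= UI_COOLDOWN_SECONDS

    def remediate(self, issue: str) -> str:
        """Try each tier in order and report which one handled the issue."""
        if self.api_fix(issue):
            return "api"
        if not self._ui_allowed():
            # Assumption: during the cooldown, wait for a later cycle
            # instead of escalating immediately.
            return "deferred"
        self._last_ui_attempt = self.clock()
        if self.ui_fix(issue):
            return "ui"
        self.escalate(issue)
        return "escalated"
```

Injecting the clock makes the cooldown testable without waiting two hours, which Qui-Gon would consider an unacceptable use of wall time.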

Two real incidents from the field:

An Ecobee went offline. The healer traced it to a stale HomeKit Controller config entry — a ghost device blocking rediscovery. The API heal stage deleted the stale entry, HA rediscovered the sensors, and four temperature readings reappeared without anyone noticing they’d been gone. Which is the point.

48 Tuya lights went dark simultaneously. The healer diagnosed the failure, saw that every entity failed at the same timestamp, recognized it as a cloud connection drop, and did nothing. No reload. No Playwright. It logged the incident, set severity to 3, and waited. The cloud recovered. The lights recovered. The healer verified and closed the case. Knowing when not to act is the hardest part of automation, and the part most systems get catastrophically wrong.
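The “do nothing” decision in the Tuya incident rests on one observation: many entities failing at the same instant points upstream, not at the devices. A minimal sketch of that check follows; the function name, the entity ids, the one-second bucketing, and the minimum entity count are all assumptions for illustration.

```python
from collections import Counter
from datetime import datetime


def looks_like_cloud_drop(failed_at: dict[str, datetime],
                          min_entities: int = 10) -> bool:
    """True when many entities all failed at the same timestamp.

    `failed_at` maps an entity id to the time it became unavailable.
    """
    if len(failed_at) < min_entities:
        return False  # too few failures to suggest a shared upstream cause
    # Truncate to whole seconds so sub-second jitter does not split the group.
    buckets = Counter(ts.replace(microsecond=0) for ts in failed_at.values())
    return len(buckets) == 1
```

When this returns True, the sensible remediation is to log, wait, and verify recovery, since reloading an integration cannot fix somebody else's outage.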

Technical Specifications

| Parameter | Value |
|---|---|
| Agent type | efficiency |
| Host | VM (Ubuntu 24.04, systemd user service) |
| Model tier | council-routine (Council MLX local primary, cloud fallbacks) |
| Skills | mac-mini-ops, service-doctor, ha-self-healer, version-watchdog |
| Plugins | sanctum-memory (local, :42069) + Jina Reranker (Graphiti at 127.0.0.1:31416) |
| Workspace | ~/.openclaw/workspace-quigon/ |
| Gateway | OpenClaw (VM), port via systemd user service |
| Schedule | Weekly performance reviews, on-demand infrastructure checks, HA self-healer every 30 min, version watchdog Monday 7 AM |
| Test suites | quigon-infra.sh (12 tests), quigon-resilience.sh (8 tests) |
| Metrics DB | ~/.sanctum/metrics/metrics.db (SQLite) |
| Report output | ~/.sanctum/memory/events/{year}/{month}/perf-review-{date}.md |
| Audit log | ~/.sanctum/audit/audit.log |

Configuration

Qui-Gon is defined in instance.yaml under the services section:

    quigon:
      enabled: true
      type: efficiency
      host: vm
      workspace: "~/.openclaw/workspace-quigon"
      model: council-routine
      skills:
        - mac-mini-ops
        - service-doctor
        - ha-self-healer
        - version-watchdog
      schedule:
        perf_review: weekly
        ha_self_healer: "*/30 * * * *"
        version_check: "0 7 * * 1"
      description: "Efficiency Agent — resource optimization and cost analysis"

The test suites live in the shared skills repository:

    ~/Projects/openclaw-skills/council-router/tests/suites/
      quigon-infra.sh        # 12 infrastructure tests
      quigon-resilience.sh   # 8 resilience & performance tests

Performance reviews are triggered by cron and write their output alongside an audit trail entry:

    ~/.sanctum/memory/events/2026/03/perf-review-2026-03-23.md
    ~/.sanctum/audit/audit.log → {"ts":"...","action":"perf-review","period_days":7,...}

That workspace markdown is no longer a one-machine snowflake. The canonical IDENTITY.md, SOUL.md, TOOLS.md, and HEARTBEAT.md now live in the raw mlx-finetune workspace and are fanned out mechanically to the runtime copies on the VM, the Mac Mini, and any reachable mobile node. That same canonical markdown is what the overnight fine-tuning pipeline reads, so the trained Qui-Gon and the live runtime Qui-Gon are now supposed to disagree only when the model itself drifts, not because one machine missed a markdown edit.

The Optimization Paradox

There is a version of Qui-Gon that exists in theory: the one where every recommendation is implemented, every inefficiency is resolved, every service runs at exactly its minimum viable resource allocation, and the entire system hums at peak performance like a Swiss train schedule.

In practice, what happens is: Qui-Gon suggests something is 4% suboptimal. The operator reads the report. The operator considers whether the 4% improvement is worth twenty minutes of configuration changes and the nonzero risk of breaking something that currently works. The operator closes the report. Qui-Gon notes this in next week’s review under “deferred recommendations.” The list grows. It always grows.

This is not a bug. This is the fundamental tension of efficiency engineering: the most efficient thing is often to leave the inefficient thing alone.

Qui-Gon will never accept this. That is what makes him useful.