Sanctum Cloud Proxy

When your haus talks to the cloud, it shouldn’t just ask for an API key and hope for the best. Our original sanctum-cloud-proxy was a 100-line Python script that implemented “Military-Grade” security checks: fetching keys securely, tracking costs, and managing fallbacks. It was functional, but it kept the Python interpreter chained to our most critical networking path.

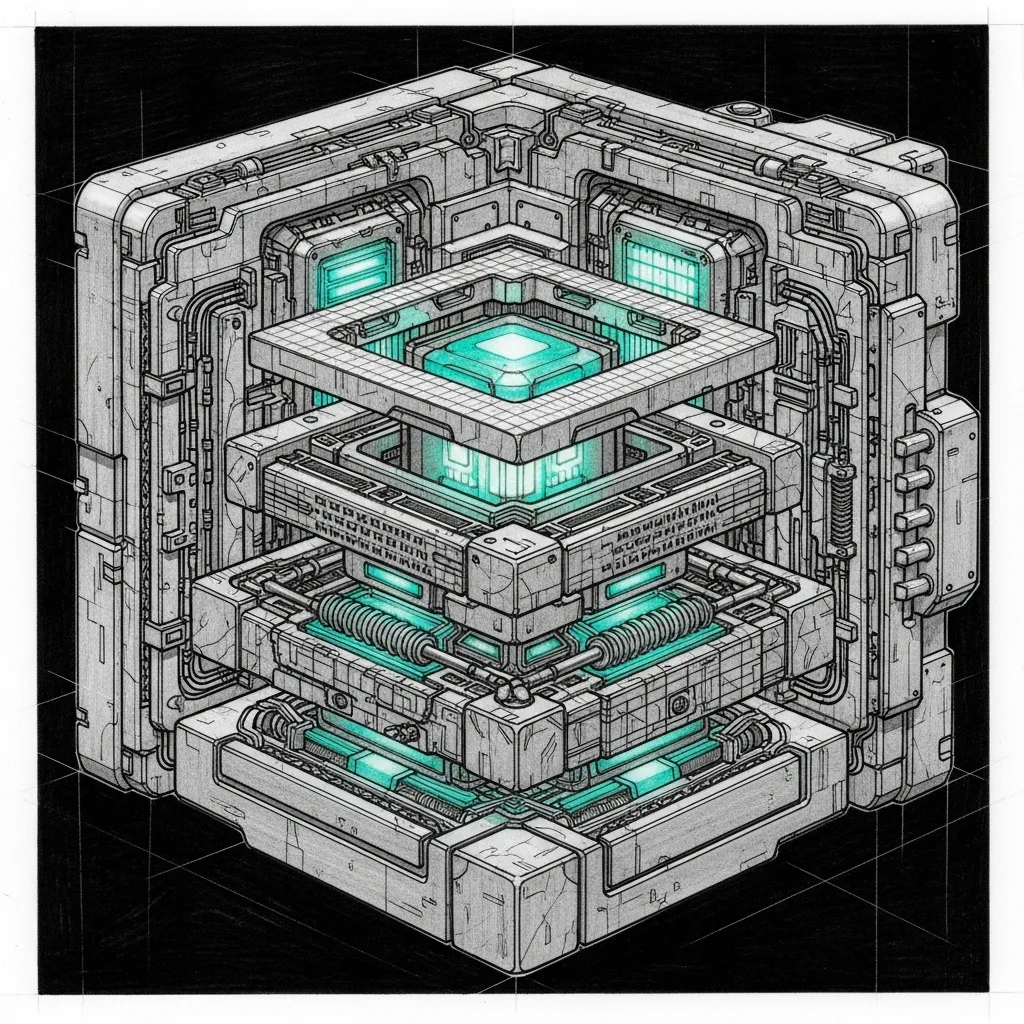

We have ported the entire 5-layer hardening suite directly into the existing sanctum-proxy Rust crate. The Python proxy is dead; the native Rust daemon on port 4040 now does it all.

How It Works

The sanctum-proxy runs on 10.10.10.1:4040. It intercepts all LLM API requests and subjects them to 5 layers of verification before forwarding them to the external cloud (like Anthropic or OpenRouter) or routing them to local models.

The 5 Layers of Hardening

- Key Security: No API keys exist in source code, `.env` files, or Git. The proxy securely requests credentials at runtime using the native `security-framework` Rust crate to query the macOS Secure Enclave directly. If a key is missing, it dynamically prompts the user via an AppleScript dialog or CLI to securely store it.
- Fallback Chain: If a cloud provider returns a 429 Rate Limit, a 500 Server Error, or times out, the proxy automatically falls back to local models (first `coder-14b`, then the local `gemma-4` 31B model) without the client ever knowing the cloud failed.
- Cost Control (Daily Caps): The proxy tracks real-time USD spend in memory. It applies hardcoded daily budgets per agent (`windu=$2.00`, `mothma=$2.00`, `jocasta=$1.50`, `default=$0.50`). If an agent blows their budget, cloud models are silently dropped from their available routes, forcing them onto local inference.
- Audit Trail & Observability: Every single request is logged into a structured JSONL file (`~/.sanctum/logs/cloud-proxy-audit.jsonl`). Additionally, the proxy natively exposes a live `/stats` HTTP endpoint on port 4040, allowing for real-time visibility into who spent what today, without ever needing to parse logs manually.
- Rate Limiting: An internal token bucket tracks provider quotas and implements exponential backoff to gracefully handle high traffic spikes.
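The interaction between the daily caps and the fallback chain can be sketched as a small route planner. This is only an illustration under stated assumptions, not the code in sanctum-proxy: the `Route`, `SpendTracker`, `routes_for`, and `should_fall_back` names are hypothetical, the cloud model name is passed in by the caller, and treating "spend equal to the cap" as over budget is a guess. The budget figures and local model order are taken from the list above.

```rust
use std::collections::HashMap;

/// A destination the proxy could forward a request to (hypothetical type).
#[derive(Debug, Clone, PartialEq)]
enum Route {
    Cloud(&'static str),
    Local(&'static str),
}

/// Daily budget in USD per agent, using the figures quoted in the text.
fn daily_cap_usd(agent: &str) -> f64 {
    match agent {
        "windu" | "mothma" => 2.00,
        "jocasta" => 1.50,
        _ => 0.50, // the `default` budget
    }
}

/// In-memory spend tracker keyed by agent name (hypothetical type).
#[derive(Default)]
struct SpendTracker {
    spent_today: HashMap<String, f64>,
}

impl SpendTracker {
    /// Add the cost of one completed request to the agent's running total.
    fn record(&mut self, agent: &str, usd: f64) {
        *self.spent_today.entry(agent.to_string()).or_insert(0.0) += usd;
    }

    /// Assumption: reaching the cap exactly already counts as over budget.
    fn over_budget(&self, agent: &str) -> bool {
        let spent = self.spent_today.get(agent).copied().unwrap_or(0.0);
        spent >= daily_cap_usd(agent)
    }

    /// Ordered list of routes to try. The cloud route is dropped once the
    /// agent is over budget; the local models always remain as fallbacks.
    fn routes_for(&self, agent: &str, cloud_model: &'static str) -> Vec<Route> {
        let mut routes = Vec::new();
        if !self.over_budget(agent) {
            routes.push(Route::Cloud(cloud_model));
        }
        routes.push(Route::Local("coder-14b"));
        routes.push(Route::Local("gemma-4"));
        routes
    }
}

/// Whether a cloud attempt should move on to the next route.
/// `None` stands for a timeout; 429 and 500 are the statuses named in the text.
fn should_fall_back(status: Option<u16>) -> bool {
    matches!(status, None | Some(429) | Some(500))
}
```

A caller would walk the vector returned by `routes_for` in order and advance to the next entry whenever `should_fall_back` returns true, so the client only ever sees the first successful response.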

Why Rust?

LLM routing sits on the critical path for every autonomous action in the Sanctum. The previous Python implementation added measurable latency during HTTP parsing and context switching.

By folding the hardening logic into the sanctum-proxy Rust binary:

- We eliminated the Python interpreter and the `requests` library overhead.
- Memory usage dropped from ~45MB to ~4MB.
- Concurrency improved massively via the `tokio` async runtime.
- The entire routing architecture (both smart intent routing and security hardening) is now unified in one repository, `sanctum-rs`.

Configuration & Dynamic Healing

The proxy loads its initial settings from ~/.sanctum/sanctum-proxy/config.yaml, but the architecture incorporates “Apple-like” intelligence:

- Zero-Config Hot Reloading: Changes made to the `config.yaml` file are picked up instantly via the `notify` crate, seamlessly swapping the new configuration into memory (via `arc-swap`) without ever dropping an active Server-Sent Event (SSE) stream.
- Zero Trust Architecture: The proxy does not trust the agents. It intercepts requests, verifies the agent’s identity via headers or endpoint routing, and calculates the cost independently. If an agent tries to hallucinate a cheaper model name to bypass its budget, the proxy will catch it and bill the actual tokens used.
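To show why swapping the configuration does not disturb an in-flight stream, here is a minimal sketch of the snapshot pattern. It deliberately uses only the standard library (`RwLock<Arc<T>>`) as a stand-in for `arc-swap`, and it leaves out the `notify` file watcher entirely. The `ProxyConfig` field and the `ConfigStore` type are hypothetical, not the proxy's actual schema.

```rust
use std::sync::{Arc, RwLock};

/// Hypothetical subset of the settings that might live in config.yaml.
#[derive(Debug, Clone, PartialEq)]
struct ProxyConfig {
    default_daily_cap_usd: f64,
}

/// Shared handle to the current configuration (std-only stand-in for arc-swap).
struct ConfigStore {
    current: RwLock<Arc<ProxyConfig>>,
}

impl ConfigStore {
    fn new(initial: ProxyConfig) -> Self {
        Self { current: RwLock::new(Arc::new(initial)) }
    }

    /// Take a cheap snapshot: this clones the Arc pointer, not the config.
    /// A long-lived request keeps using its snapshot until it finishes.
    fn load(&self) -> Arc<ProxyConfig> {
        let guard = self.current.read().unwrap();
        Arc::clone(&*guard)
    }

    /// Publish a freshly parsed configuration. Existing snapshots stay valid
    /// because they hold their own reference to the previous value.
    fn store(&self, next: ProxyConfig) {
        *self.current.write().unwrap() = Arc::new(next);
    }
}
```

In this sketch a handler serving an SSE stream would call `load` once at the start and hold the returned `Arc`, while a watcher thread calls `store` after re-parsing the file. New requests see the new settings; the running stream keeps its original snapshot and is never interrupted.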