Sanctum Proxy

The Sanctum Proxy is a 4.6MB Rust binary that routes every LLM request in the platform. It replaced a Python LiteLLM stack that has since been fully removed from the codebase — every reference, variable name, and config entry scrubbed in March 2026. The Rust proxy does the same job with less RAM, less latency, and zero opinions about garbage collection.

It listens on port 4040. It knows about 20 models across 4 providers. It maintains 16 fallback chains. And it has a budget system that will cut off an agent mid-sentence if the math says so. The proxy does not negotiate. The proxy does not feel bad about it.

What It Does

Every agent, every Claude Code session, every voice query: all of it flows through this binary. One process. No microservice archipelago. Just a single Rust daemon standing between your agents and the cloud providers who bill by the breath.

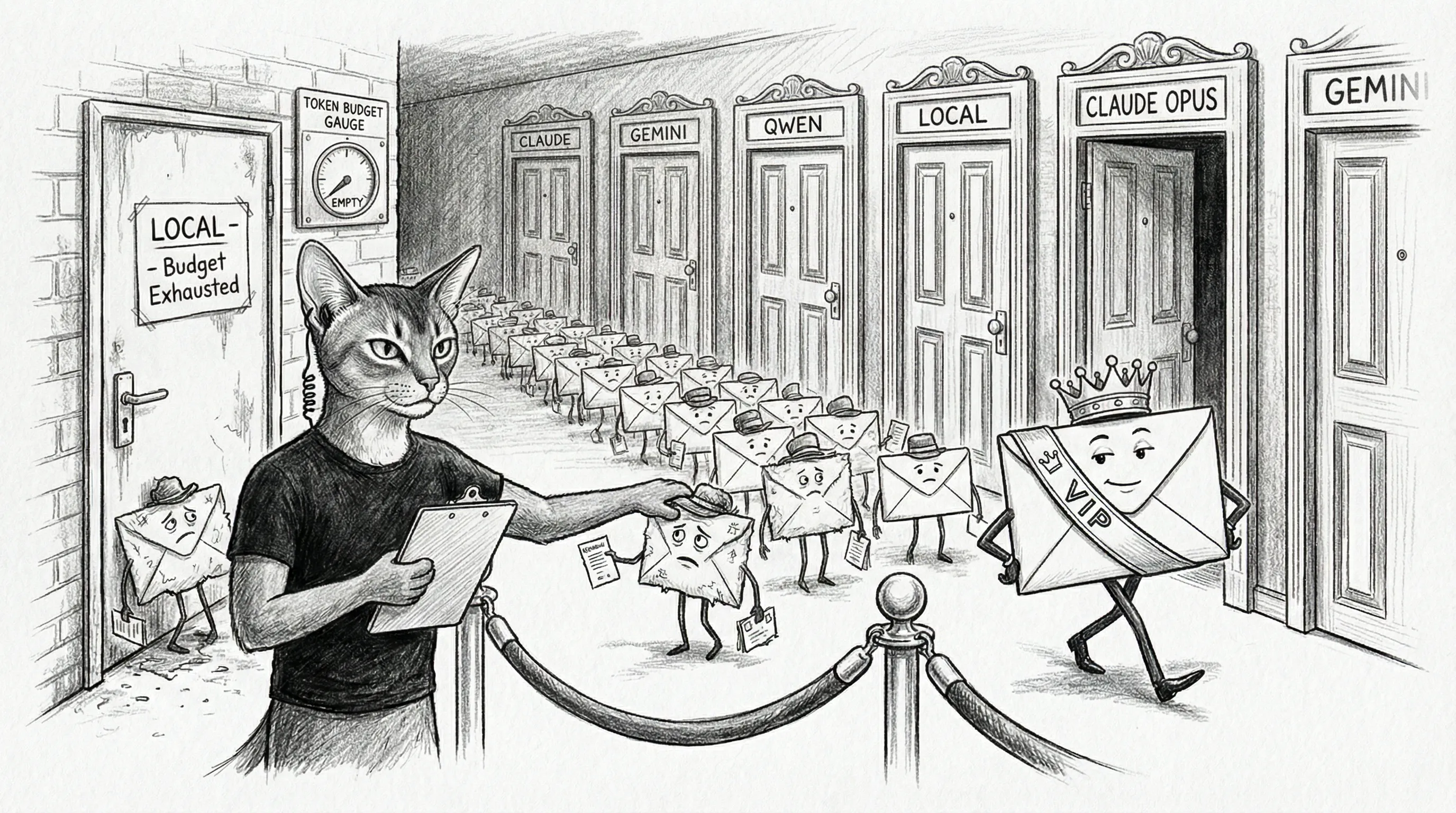

The proxy receives an OpenAI-compatible request, decides which provider should handle it, transforms the request to match that provider’s format, streams the response back, and tracks every token spent. Think of it as a bouncer, a translator, and an accountant fused into one very small, very fast organism.

Config-driven. One config.yaml file declares the models, the fallback chains, the quality tiers, and the budget limits. Change the file, restart the binary, new reality. No database. No state directory. Just a YAML file and convictions.

Performance Benchmark

The Rust proxy is built for absolute minimum overhead. In a local stress test using ApacheBench (ab -n 50000 -c 100), the proxy’s core HTTP event loop achieved staggering results:

- 142,000+ Requests Per Second (mean)

- Sub-Millisecond Latency: 0.7ms mean response time

- Zero Drop Rate: 50,000 requests processed with 100 concurrent connections and zero failures.

- P99 Latency: 2 milliseconds

On these numbers, sanctum-proxy adds well under a millisecond of mean overhead to your LLM API calls, and about 2 ms at P99. Your bottleneck is the speed of light to the cloud providers or the inference speed of your local models. The routing layer itself is essentially frictionless. John Carmack would indeed be proud.

The 6-Layer Routing Engine

When a request arrives, it falls through six layers of increasingly opinionated decision-making. The first layer that has a strong opinion wins. The others shut up.

Layer 1 is the velvet rope. claude-opus-4-7 and council-secure are tier 0: they go where they’re told, always. The proxy does not second-guess these requests. It does not optimize them. It delivers them with the quiet deference of a butler who knows better than to suggest a cheaper wine. Tier 0 gets the real thing. Anything but the damn root beer. If the agent asked for Opus, Opus is what the agent gets.

Layer 6 is the bouncer. When an agent exhausts its daily token budget, every subsequent request gets rerouted to a local model running on Apple Silicon three feet away. The agent can still think. It just thinks smaller. Like being demoted from a corner office to a broom closet — you can still have ideas, but the ambiance has changed.
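The first-opinion-wins rule can be sketched as an ordered list of layer functions, each returning either a decision or nothing. This is a minimal sketch, not the proxy's code: only Layers 1 and 6 are described here, so Layers 2 through 5 are left out, and the names Request, Route, and LOCAL_BUDGET_MODEL, along with the choice of council-mlx as the local target, are assumptions made for illustration.

```rust
/// Hypothetical request summary; the proxy's real types are not shown on this page.
pub struct Request {
    pub model: String,
    pub quality_tier: u8,
    pub budget_exhausted: bool,
}

#[derive(Debug, PartialEq)]
pub enum Route {
    /// Layer 1: tier 0 models are delivered exactly as requested.
    Pinned(String),
    /// Layer 6: the agent is out of budget, so a local model answers.
    BudgetLocal(String),
    /// No layer had an opinion; use the requested model.
    AsRequested(String),
}

/// Assumed local target for budget-exhausted agents.
const LOCAL_BUDGET_MODEL: &str = "council-mlx";

type Layer = fn(&Request) -> Option<Route>;

fn layer1_tier0(req: &Request) -> Option<Route> {
    (req.quality_tier == 0).then(|| Route::Pinned(req.model.clone()))
}

fn layer6_budget(req: &Request) -> Option<Route> {
    req.budget_exhausted
        .then(|| Route::BudgetLocal(LOCAL_BUDGET_MODEL.to_string()))
}

/// Layers 2 through 5 would sit between these two entries.
const LAYERS: [Layer; 2] = [layer1_tier0, layer6_budget];

/// Walk the layers in order and stop at the first one that returns a decision.
pub fn route(req: &Request) -> Route {
    LAYERS
        .iter()
        .find_map(|layer| layer(req))
        .unwrap_or_else(|| Route::AsRequested(req.model.clone()))
}
```

Because Layer 1 runs first in this sketch, a tier 0 request stays pinned even when the budget flag is set, which is one reading of "always."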

Budget System

Five AI agents sharing one wallet. What could go wrong.

Each agent gets a token bucket backed by a lock-free AtomicI64. No mutexes. No contention. Five agents can burn tokens simultaneously and the counters stay correct because atomic operations don’t have feelings about fairness — they just increment. This is, incidentally, the ideal personality for anything handling money.

The system tracks Claude Max free-token allocation separately, because free tokens are the most dangerous kind. They feel infinite until they aren’t. Free tokens get spent first. When they’re gone, paid tokens start. When those hit the daily ceiling, the circuit breaker fires and all traffic diverts to local models. The party’s over. Everyone goes haus to Apple Silicon.
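The free-first, paid-second, breaker-last order can be sketched with two atomic counters per agent. This is an illustration under stated assumptions rather than the proxy's implementation: the AgentBudget type, its field names, and the record method are hypothetical, and token counts are assumed to be non-negative.

```rust
use std::sync::atomic::{AtomicI64, Ordering};

/// Hypothetical per-agent budget: free tokens are drained before paid ones.
pub struct AgentBudget {
    free_remaining: AtomicI64,
    paid_spent: AtomicI64,
    paid_daily_limit: i64,
}

impl AgentBudget {
    pub fn new(free_daily: i64, paid_daily_limit: i64) -> Self {
        Self {
            free_remaining: AtomicI64::new(free_daily),
            paid_spent: AtomicI64::new(0),
            paid_daily_limit,
        }
    }

    /// Record `tokens` of usage without taking a lock.
    /// Returns true once paid spend has reached the daily ceiling,
    /// which is the point where the circuit breaker should fire.
    pub fn record(&self, tokens: i64) -> bool {
        // Compare-and-swap loop: take as much as possible from the free pool.
        let mut taken = 0;
        let _ = self
            .free_remaining
            .fetch_update(Ordering::AcqRel, Ordering::Acquire, |free| {
                taken = free.min(tokens).max(0);
                Some(free - taken)
            });
        // Whatever the free pool could not cover is charged as paid usage.
        let overflow = tokens - taken;
        let paid = self.paid_spent.fetch_add(overflow, Ordering::AcqRel) + overflow;
        paid >= self.paid_daily_limit
    }

    pub fn free_remaining(&self) -> i64 {
        self.free_remaining.load(Ordering::Acquire)
    }

    pub fn paid_spent(&self) -> i64 {
        self.paid_spent.load(Ordering::Acquire)
    }
}
```

Each counter stays exact under concurrent writers, but the two are not updated as one atomic unit, so a reader may briefly see the free pool drained before the matching paid charge lands. For a daily ceiling that is usually an acceptable trade for not holding a mutex.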

Every token transaction writes to a JSONL log via an async mpsc channel. Non-blocking. Batch-flushed. The budget system never slows down a request to write a receipt. It’s the financial equivalent of speed-running your accounting — technically correct, delivered at wire speed, with the audit trail written in the margins.
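The receipt-writing path can be sketched as a channel with a single consumer that batches lines before flushing. The proxy uses an async mpsc channel; this sketch substitutes std::sync::mpsc and a plain thread so it runs without an async runtime. The UsageEntry fields, the spawn_usage_logger name, and the batch size parameter are all assumptions.

```rust
use std::io::Write;
use std::sync::mpsc::{self, Sender};
use std::thread::{self, JoinHandle};

/// Hypothetical log record; the agent field is an assumed name.
pub struct UsageEntry {
    pub agent: String,
    pub input_tokens: u64,
    pub output_tokens: u64,
}

impl UsageEntry {
    /// One JSON object per line. The agent name is not escaped here,
    /// so this only suits names without quotes or backslashes.
    fn to_jsonl(&self) -> String {
        format!(
            "{{\"agent\":\"{}\",\"input_tokens\":{},\"output_tokens\":{}}}\n",
            self.agent, self.input_tokens, self.output_tokens
        )
    }
}

/// Start a writer thread. Senders never wait on disk I/O; the thread
/// flushes every `batch_size` entries and once more when all senders drop.
pub fn spawn_usage_logger<W: Write + Send + 'static>(
    mut sink: W,
    batch_size: usize,
) -> (Sender<UsageEntry>, JoinHandle<W>) {
    let (tx, rx) = mpsc::channel::<UsageEntry>();
    let handle = thread::spawn(move || {
        let mut batch = String::new();
        let mut pending = 0;
        for entry in rx {
            batch.push_str(&entry.to_jsonl());
            pending += 1;
            if pending >= batch_size {
                let _ = sink.write_all(batch.as_bytes());
                let _ = sink.flush();
                batch.clear();
                pending = 0;
            }
        }
        // Channel closed: write whatever is left in the final partial batch.
        let _ = sink.write_all(batch.as_bytes());
        let _ = sink.flush();
        sink
    });
    (tx, handle)
}
```

The request path only pays for a channel send. The cost is that a crash can lose up to one unflushed batch of receipts.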

The Claude Code Incident

The proxy was built for agents. Then Claude Code showed up, a different species of client entirely, with different assumptions about authentication, model naming, and the basic social contract of an API request. The proxy failed in two ways that 52 passing tests didn’t catch. The tests were green. The system was on fire. This is, apparently, what progress looks like.

Here’s how it went down.

Claude Code sends model IDs like claude-haiku-4-5-20251001. The proxy knew about 17 models. It had strong opinions about all of them. This was not one of them. Unknown model. Door’s closed. Go away.

Meanwhile — and this is the part where the proxy became the kind of helpful that gets people killed in horror movies — Claude Code authenticates with an OAuth token via the authorization header. The proxy, in an act of breathtaking helpfulness, replaced it with its own API key. It saw a perfectly valid credential, thought “I know a better one,” and swapped it out like a valet who decides your car keys would work better if they were his car keys.

The request arrived at Anthropic carrying credentials from a different billing context. Anthropic, with the warmth of a bank teller who has just been handed someone else’s driver’s license, declined the transaction.

Two bugs. Both invisible to the test suite. Both obvious in retrospect — which is, of course, the only time anything is obvious.

The fix: auto-passthrough for any claude-* model not in the config (no routing, no transformation, just forward it and keep your hands to yourself). Auth priority: client OAuth token wins over proxy API key, always, no exceptions, no helpful overrides. Seven new integration tests that send requests the way Claude Code actually sends them, not the way the proxy wished they would.
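Both rules in the fix are small enough to sketch as pure functions. The names classify_model, choose_auth, ModelRoute, and UpstreamAuth are hypothetical, and rejecting unknown models that do not start with claude- is an assumption carried over from the pre-fix behaviour.

```rust
#[derive(Debug, PartialEq)]
pub enum ModelRoute {
    /// Listed in config.yaml: normal routing applies.
    Configured,
    /// Unlisted claude-* model: forward untouched.
    Passthrough,
    /// Unlisted and not a claude-* model (assumed to be refused).
    Rejected,
}

pub fn classify_model(requested: &str, configured: &[&str]) -> ModelRoute {
    if configured.contains(&requested) {
        ModelRoute::Configured
    } else if requested.starts_with("claude-") {
        ModelRoute::Passthrough
    } else {
        ModelRoute::Rejected
    }
}

#[derive(Debug, PartialEq)]
pub enum UpstreamAuth {
    /// Forward the client's own authorization header value unchanged.
    ClientAuthorization(String),
    /// No client credential was sent, so fall back to the proxy's key.
    ProxyApiKey(String),
}

/// A client-supplied credential always wins over the proxy's API key.
pub fn choose_auth(client_authorization: Option<&str>, proxy_api_key: &str) -> UpstreamAuth {
    match client_authorization.map(str::trim).filter(|v| !v.is_empty()) {
        Some(value) => UpstreamAuth::ClientAuthorization(value.to_string()),
        None => UpstreamAuth::ProxyApiKey(proxy_api_key.to_string()),
    }
}
```

Keeping both decisions free of I/O is what makes them cheap to cover with tests that mimic the exact headers and model IDs a client sends.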

Streaming Token Tracking

This is the bug that earned its own council session. Five AI agents convened in the digital equivalent of a smoke-filled room, argued about streaming protocol semantics with the intensity of constitutional scholars debating a comma, and voted unanimously to deploy a fix immediately rather than shadow-test it. When the robots skip their own safety protocol because the situation is that urgent, you should probably pay attention.

The problem was quiet and catastrophic. Streaming SSE responses, the kind Claude Code uses for every single interaction, were being recorded with zero tokens. The proxy faithfully logged input_tokens: 0, output_tokens: 0 for the most expensive traffic in the entire system. The budget circuit breaker, whose sole reason for existence is to notice when money is being spent, watched Claude Code burn through token allocations and reported: all clear. Everything’s fine. Nothing to see here. The meter is definitely running. It just reads zero.

The council’s verdict was succinct and damning: “This is not a tracking bug. It is a budget circuit breaker that does not fire.”

A budget system that can’t see your most expensive traffic isn’t a budget system. It’s a decorative gauge on the dashboard of a car with no brakes.

The fix: StreamUsageExtractor, a state machine that captures token counts from the final events in an SSE stream. Two-phase for Anthropic (input tokens arrive in message_start, output tokens in message_delta). Single-phase for OpenAI-format providers, with stream_options: {"include_usage": true} injected into the request. If the connection drops before the final event, it estimates from content bytes and marks the entry source: "estimated" — because an honest guess beats a confident zero.
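The two-phase Anthropic path, plus the estimated fallback, can be sketched as a tiny state machine. This is a toy, not the real StreamUsageExtractor: it scans the event payload for a numeric field instead of parsing JSON, it covers only the Anthropic event names mentioned above, and the four-bytes-per-token estimate is an assumed heuristic.

```rust
#[derive(Debug, PartialEq, Clone, Copy)]
pub enum UsageSource {
    Reported,
    Estimated,
}

#[derive(Debug, PartialEq)]
pub struct Usage {
    pub input_tokens: u64,
    pub output_tokens: u64,
    pub source: UsageSource,
}

/// Hypothetical stand-in for the extractor described above.
#[derive(Default)]
pub struct StreamUsageSketch {
    input: Option<u64>,
    output: Option<u64>,
    content_bytes: usize,
}

/// Naive field lookup: finds `"key": <digits>` in a JSON string.
/// A real implementation would use a JSON parser.
fn find_number(data: &str, key: &str) -> Option<u64> {
    let needle = format!("\"{}\":", key);
    let start = data.find(&needle)? + needle.len();
    let digits: String = data[start..]
        .chars()
        .skip_while(|c| c.is_whitespace())
        .take_while(|c| c.is_ascii_digit())
        .collect();
    digits.parse().ok()
}

impl StreamUsageSketch {
    /// Feed one SSE event (its `event:` name and `data:` payload).
    pub fn on_event(&mut self, event: &str, data: &str) {
        match event {
            "message_start" => self.input = find_number(data, "input_tokens").or(self.input),
            "message_delta" => self.output = find_number(data, "output_tokens").or(self.output),
            // Crude: counts the whole payload, not just the generated text.
            "content_block_delta" => self.content_bytes += data.len(),
            _ => {}
        }
    }

    /// Call when the stream ends, cleanly or not.
    pub fn finish(&self) -> Usage {
        match (self.input, self.output) {
            (Some(input_tokens), Some(output_tokens)) => Usage {
                input_tokens,
                output_tokens,
                source: UsageSource::Reported,
            },
            // Missing a phase: estimate output from bytes seen so far.
            _ => Usage {
                input_tokens: self.input.unwrap_or(0),
                output_tokens: (self.content_bytes / 4) as u64,
                source: UsageSource::Estimated,
            },
        }
    }
}
```

The point of the Estimated variant is that a dropped connection still produces a non-zero entry the circuit breaker can count.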

Hard cap: 50 concurrent tracked streams. After that, new streams get estimated rather than buffered. The proxy protects itself from its own diligence. Even accounting has limits.
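One way to enforce a cap like this without a lock is an atomic counter with a guard that releases its slot on drop. The sketch below assumes that shape; StreamSlots, SlotGuard, and MAX_TRACKED_STREAMS are hypothetical names, and a caller that gets None back would fall through to the estimated path.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::Arc;

pub const MAX_TRACKED_STREAMS: usize = 50;

/// Shared counter of streams currently being tracked.
#[derive(Clone, Default)]
pub struct StreamSlots {
    active: Arc<AtomicUsize>,
}

/// Holding a guard means one tracking slot is in use; dropping it frees the slot.
pub struct SlotGuard {
    active: Arc<AtomicUsize>,
}

impl StreamSlots {
    /// Returns a guard if a slot is free, or None when the cap is reached.
    pub fn try_acquire(&self) -> Option<SlotGuard> {
        self.active
            .fetch_update(Ordering::AcqRel, Ordering::Acquire, |n| {
                if n < MAX_TRACKED_STREAMS {
                    Some(n + 1)
                } else {
                    None
                }
            })
            .ok()
            .map(|_| SlotGuard {
                active: Arc::clone(&self.active),
            })
    }

    pub fn active(&self) -> usize {
        self.active.load(Ordering::Acquire)
    }
}

impl Drop for SlotGuard {
    fn drop(&mut self) {
        self.active.fetch_sub(1, Ordering::AcqRel);
    }
}
```

Tying release to Drop means a stream that errors out or is cancelled still gives its slot back.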

Automated Model Discovery (Model Scout)

Because the proxy maintains static YAML configurations, staying ahead of new AI models could easily become a manual chore. To prevent this, Sanctum leverages a dedicated model-scout background service running on the Mac.

The scout wakes up every Monday at 06:23 (via com.sanctum.model-scout.plist) and pings the OpenRouter and Google AI APIs. It parses the resulting JSON payloads against the current config.yaml state to dynamically rate models you aren’t currently using. The scoring algorithm assigns points based on low input/output token costs, massively expanded context windows, and recent release timestamps.
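A points-based scorer over those three signals might look like the sketch below. Every threshold and weight here is invented for illustration, as are the Candidate type and its field names; the scout's actual numbers are not documented on this page.

```rust
/// Hypothetical view of one model returned by a provider's listing API.
pub struct Candidate {
    /// USD per million input tokens.
    pub input_cost_per_mtok: f64,
    /// USD per million output tokens.
    pub output_cost_per_mtok: f64,
    /// Maximum context window, in tokens.
    pub context_window: u64,
    /// Age of the model release, in days.
    pub days_since_release: u32,
}

/// Score from 0 to 100. All cut-offs below are assumptions.
pub fn score(c: &Candidate) -> u32 {
    let combined_cost = c.input_cost_per_mtok + c.output_cost_per_mtok;

    // Cheaper is better; free tiers score highest.
    let cost_points = if combined_cost == 0.0 {
        40
    } else if combined_cost <= 2.0 {
        25
    } else if combined_cost <= 10.0 {
        10
    } else {
        0
    };

    // Larger context windows earn more.
    let context_points = if c.context_window >= 1_000_000 {
        30
    } else if c.context_window >= 200_000 {
        15
    } else {
        0
    };

    // Recent releases earn more.
    let recency_points = if c.days_since_release <= 30 {
        30
    } else if c.days_since_release <= 90 {
        15
    } else {
        0
    };

    cost_points + context_points + recency_points
}
```

Keeping the three components separate makes it straightforward to include a per-signal breakdown in a report.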

When interesting candidates—such as a new zero-cost tier or a highly capable $2/M context giant—are detected, two things happen:

- Memory Vault Report: A structured Markdown event digest is deposited directly into ~/.sanctum/memory/events, containing score breakdowns and cost metrics for offline observation.

- Jedi Council Orchestration: Using the council-router, the scout automatically fires a formal normal-priority request directly to Qui-Gon (the infrastructure agent on the VM). Qui-Gon is explicitly instructed to review the new metrics and independently convene the rest of the Jedi Council to vote on structural proxy upgrades.

Configuration

The proxy reads a single config.yaml at startup. No hot-reload. No live patching. No “let me just tweak this one value in production.” Change the file, restart the binary, twelve seconds of downtime. The agents will survive. They’ve been through worse; see literally every other page in this documentation.

    # ~/.sanctum/sanctum-proxy/config.yaml (abbreviated)
    listen: "0.0.0.0:4040"

    providers:
      anthropic:
        base_url: "https://api.anthropic.com"
      openrouter:
        base_url: "https://openrouter.ai/api/v1"
      gemini:
        base_url: "https://generativelanguage.googleapis.com"
      local:
        base_url: "http://localhost:1234"

    models:
      claude-opus-4-7:
        provider: anthropic
        quality_tier: 0  # never reroute
        smart_route: false
      council-brain:
        provider: anthropic
        quality_tier: 1
        smart_route: true
        fallback: [council-code, council-mlx]
      council-code:
        provider: local
        quality_tier: 1
        smart_route: false
        fallback: [council-mlx]
      council-ops:
        provider: local
        quality_tier: 2
        smart_route: true
        fallback: [council-mlx]

    budget:
      daily_limit_per_agent: 500000
      claude_max_free_daily: 500000
      circuit_breaker: true

Notice smart_route. That flag is a scar from v0.1. The original code had a hardcoded list of routable tiers buried in route.rs, a little secret the config file didn’t know about. Config said one thing. Code did another. The config lost, quietly, for weeks, while everyone stared at the YAML and wondered why tier 2 models weren’t routing correctly. Now smart_route: bool lives in the YAML where it belongs, and the code reads it without editorial comment. Trust issues, resolved through explicit declaration. Therapy for software.
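The per-model fields in the config map naturally onto a small struct, and a fallback list is just an ordered walk. This is a sketch under assumptions: ModelConfig, may_smart_route, and first_available are hypothetical names, the health check is passed in as a closure, and only the requested model's own fallback list is consulted (no recursion into the fallbacks' fallbacks).

```rust
use std::collections::HashMap;

/// Mirrors one entry under `models:` in config.yaml.
pub struct ModelConfig {
    pub provider: String,
    pub quality_tier: u8,
    pub smart_route: bool,
    pub fallback: Vec<String>,
}

/// The routing decision reads the flag from config instead of
/// consulting a hardcoded list of tiers.
pub fn may_smart_route(cfg: &ModelConfig) -> bool {
    cfg.smart_route
}

/// Try the requested model first, then each fallback in declared order.
/// Returns None for unknown models or when nothing in the chain is up.
pub fn first_available<'a>(
    models: &'a HashMap<String, ModelConfig>,
    requested: &'a str,
    is_up: impl Fn(&str) -> bool,
) -> Option<&'a str> {
    let cfg = models.get(requested)?;
    std::iter::once(requested)
        .chain(cfg.fallback.iter().map(String::as_str))
        .find(|name| is_up(*name))
}
```

With the council-brain entry from the config, an outage on the cloud provider would resolve to council-code, and to council-mlx if that is down too.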

The important practical split is this: council-brain (Opus 4.7 cloud) is the default brain, but it’s smart-routed — security and tool sessions stay on Opus 4.7, code drops to the local Coder-14B (council-code), general chat drops to the local Qwen 3.5 35B (council-mlx) so nothing small-talk-shaped leaves the haus, brainy questions (reasoning verbs or long-form prose) are promoted to Opus 4.7 with --effort max via the Claude Team CLI bridge (council-max-thinking on :2001), and images go to Gemini 3.1 Pro. The current council-brain cross-provider fallback chain is GLM 5.1 → Qwen 3.6 Plus. council-secure (Gemma4+LoRA local) handles Cilghal and Mundi’s domain-specific work. The proxy does the triage. Every decision is visible in config and in usage.jsonl instead of buried in agent prompt folklore.
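Reduced to its skeleton, that triage is a lookup from request kind to target. The sketch below assumes the classification has already happened (how the proxy detects reasoning verbs or long-form prose is not shown here); RequestKind and smart_route_target are hypothetical names, and "gemini-3.1-pro" is a placeholder label rather than a confirmed model ID.

```rust
/// Hypothetical classification of a council-brain request.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum RequestKind {
    Security,
    ToolSession,
    Code,
    GeneralChat,
    DeepReasoning,
    Image,
}

/// Where a smart-routed council-brain request ends up.
pub fn smart_route_target(kind: RequestKind) -> &'static str {
    match kind {
        // Security and tool sessions stay on the cloud brain.
        RequestKind::Security | RequestKind::ToolSession => "council-brain",
        // Code drops to the local coder model.
        RequestKind::Code => "council-code",
        // Small talk stays local.
        RequestKind::GeneralChat => "council-mlx",
        // Hard questions are promoted to the max-effort bridge.
        RequestKind::DeepReasoning => "council-max-thinking",
        // Placeholder label; the real model ID is not given on this page.
        RequestKind::Image => "gemini-3.1-pro",
    }
}
```

An exhaustive match means adding a new request kind fails to compile until someone decides where it goes.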

Claude Code Startup Preflight

Claude Code is no longer treated as a polite client that will either have valid auth or fail quietly in a corner. It has a dedicated startup preflight because the real-world failure mode was too annoying to leave to chance.

The moving parts are:

- Claude Code points ANTHROPIC_BASE_URL at http://127.0.0.1:4040

- the proxy reads anthropic-api-key from macOS Keychain through ~/.sanctum/scripts/proxy-launcher.sh

- the local claude command is wrapped by ~/.local/bin/claude-wrapper

- that wrapper runs tools/claude_session_preflight.sh before executing the real Claude binary

The preflight checks whether the Claude Team token in ~/.openclaw/agents/main/agent/auth-profiles.json and the Keychain copy under anthropic-api-key are:

- present

- synchronized

- actually valid against Anthropic

If the token is invalid, it calls tools/refresh_claude_team_token.sh --refresh, which:

- runs claude setup-token

- opens the Claude Team OAuth page in the operator’s default browser

- prompts for the returned code in the same terminal session

- can optionally use agent-browser for the hermetic automation path

- syncs the refreshed token back to Keychain

- restarts com.sanctum.proxy

This is not part of the proxy binary itself. That is deliberate. The proxy should route requests, not grow a browser and start roleplaying as an OAuth session manager. The preflight sits at the session boundary, where the repair can happen before the first real request hits the proxy and dies with invalid x-api-key.

The repair path also has its own local E2E harness:

    bash ~/Documents/Claude_Code/tests/test-claude-team-refresh-e2e.sh

That test swaps Anthropic out for a local file:// auth page and a fake setup-token binary, then proves the URL capture, browser click-through, code handoff, token sync, and proxy restart all work end to end. This is a much better way to debug the plumbing than waiting for a real token to expire and hoping the identity provider is in a cooperative mood.

Test Coverage

The coverage now sits beside live checks for local LM Studio reachability, MLX idle-managed routing, fallback resilience, Claude Code OAuth passthrough, and the local Claude Team auth-recovery harness. Because if your local tiers are part of the promise, they belong in the test count and in the blame when they break.

The tests live in the service-doctor skill because the proxy is a service and the doctor makes haus calls. It’s also the only medical professional in the system that doesn’t charge by the token.

    # Run the full suite
    bash /Users/neo/Projects/openclaw-skills/service-doctor/tests/test-proxy.sh

    # Expected: 66 passed, 0 failed, 4 skipped