sanctum-cli

Mission

sanctum is to a Sanctum host what kubectl is to a Kubernetes cluster: one terminal binary that turns a heterogeneous control plane and a pile of bespoke shell scripts into a single composable CLI.

It does three things, all of which used to be scattered across half a dozen scripts with no coherent UX:

- Routes prompts to the right model: Claude (via the local Max-subscription proxy by default, or the direct API), Gemini for spatial/vision work, and MLX-local as the offline fallback. The router is a pure function of intent × attachments × flags × instance.yaml. Every dispatch is logged with the rule that fired.

- Walks operators through painful provisioning: cloud backups (Cloudflare R2 by default, Backblaze B2, Google Drive, GitHub Tier 0), credential rotation, and first-run onboarding. The wizards are resumable, validate pasted input against regexes, write instance.yaml atomically, and verify a round trip before declaring success.

- Reports honest health: doctor probes every com.sanctum.* LaunchAgent, the configured providers, and the restic repos. Output is brevity-gated by default (one line when all is quiet, expanded only on real findings).
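The router is described as a pure function of intent × attachments × flags × instance.yaml that reports which rule fired. The following minimal sketch illustrates that idea. The request fields, provider names, rule labels, and the `default_provider` config key are all hypothetical and are not sanctum's actual schema. The function reads only its arguments, so identical inputs always produce the same route. It also returns the name of the rule that fired, so the caller can log it.

```python
from dataclasses import dataclass
from typing import Mapping, Optional, Tuple

# Hypothetical: attachment suffixes treated as "visual" input for Gemini.
IMAGE_SUFFIXES = (".png", ".jpg", ".jpeg", ".heic")


@dataclass(frozen=True)
class Request:
    intent: str                          # hypothetical labels: "chat", "code", "vision"
    attachments: Tuple[str, ...] = ()
    provider_flag: Optional[str] = None  # an explicit provider choice, e.g. from -p
    offline: bool = False                # hypothetical flag meaning "no network"


@dataclass(frozen=True)
class Route:
    provider: str
    rule: str                            # the rule that fired; logged with every dispatch


def route(req: Request, config: Mapping[str, str]) -> Route:
    """Pure: reads only its arguments, so identical inputs always give identical routes."""
    if req.provider_flag:
        return Route(req.provider_flag, "explicit-provider-flag")
    if req.offline:
        return Route("mlx-local", "offline-fallback")
    if req.intent == "code":
        return Route("claude", "intent-code")
    if req.intent == "vision" or any(a.lower().endswith(IMAGE_SUFFIXES) for a in req.attachments):
        return Route("gemini", "vision-or-visual-attachment")
    # Hypothetical config key standing in for whatever instance.yaml provides.
    return Route(config.get("default_provider", "claude"), "config-default")
```

Because the function has no hidden inputs, the dispatcher can log `route.rule` next to each call. Tests can also exercise every rule without mocking any provider.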

Why this needed to exist

The 2026-04-26 backup migration took ~90 minutes of manual click-ops to wire up Google Drive: create a Cloud project, enable the Drive API, configure the OAuth consent screen, create an OAuth client, publish the app, click through the “unverified app” warning, paste the credentials into rclone, reconnect, and retry. Every one of those steps had a non-obvious failure mode.

If “Sanctum power user” is a real audience (anyone other than Bertrand running this in their own home), that flow had to collapse from 90 minutes to under 10. The CLI’s cloud setup wizard exists to wrap each painful click in a step that opens the right URL, validates pasted input live, and recovers from known failures with a clear next action. The R2 wizard does it in ~3 minutes; the GDrive wizard does it in ~10.
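The "validates pasted input live" step can be sketched as a small prompt loop that re-asks with a concrete next action rather than failing later. This sketch makes two assumptions: the three R2 pastes are hex strings, and they are 32, 32, and 64 characters long (account ID, access key ID, secret access key). The lengths, the field names, and the retry message are illustrative assumptions, not sanctum's code. The `read` parameter defaults to `input` and can be swapped out, so the loop can be tested without a terminal.

```python
import re
from typing import Callable, Dict, Optional

# Assumed R2 credential shapes (hypothetical field names and lengths).
PASTE_PATTERNS: Dict[str, "re.Pattern[str]"] = {
    "account_id": re.compile(r"[0-9a-fA-F]{32}"),
    "access_key_id": re.compile(r"[0-9a-fA-F]{32}"),
    "secret_access_key": re.compile(r"[0-9a-fA-F]{64}"),
}


def validate_paste(field: str, raw: str) -> Optional[str]:
    """Strip surrounding whitespace; return the value only if it fully matches the pattern."""
    value = raw.strip()
    return value if PASTE_PATTERNS[field].fullmatch(value) else None


def prompt_until_valid(field: str, read: Callable[[str], str] = input, max_attempts: int = 3) -> str:
    """Re-prompt on a bad paste with a clear next action instead of failing downstream."""
    for attempt in range(1, max_attempts + 1):
        value = validate_paste(field, read(f"Paste {field}: "))
        if value is not None:
            return value
        print(f"{field} does not look right ({attempt}/{max_attempts}); re-copy it and paste again.")
    raise ValueError(f"{field}: no valid paste after {max_attempts} attempts")
```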

Design doctrine

| Pillar | Means |
|---|---|
| Closed-loop | Every operation either completes and verifies or rolls back and reports. Every wizard ends with a round-trip canary that proves the wiring works. |
| Honest | Telemetry reflects reality. Provider rate-limited? Says so. Route was overridden? Surfaced. The estimator says “11 GB raw” without claiming dedup will fix it; only the live --dry-run reveals the actual stored size. |
| Bounded | Each command has a documented worst-case latency. No surprise cloud charges. |
| Defense-in-depth | Credentials live only in the macOS Keychain, never on disk. A pre-commit secret scanner refuses to push anything matching 16 well-known credential patterns. Schema validation at every config boundary. |
| Discovery-first | No hardcoded IPs, ports, or hostnames. Everything comes from ~/.sanctum/instance.yaml or runtime discovery. |
| Brevity by default | sanctum with no arguments returns a one-liner. Output is verbose only when something is wrong or when -v is passed. |
| Python now, Rust later | Built in Python with full type hints and tests. The dispatcher hot path will be promoted to Rust at v1.0+ (per the language-maturity doctrine). |
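A secret scanner of this kind is essentially a table of named regexes applied line by line. This sketch covers a handful of widely documented credential shapes: AWS access key IDs, GitHub classic tokens, Google API keys, Anthropic API keys, and PEM private-key headers. It is an illustrative subset, not sanctum's actual 16 patterns, and the pattern names are hypothetical.

```python
import re
import sys
from typing import List, Tuple

# Illustrative subset of well-known credential shapes (NOT sanctum's actual 16 patterns).
PATTERNS = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github-pat-classic": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "google-api-key": re.compile(r"\bAIza[0-9A-Za-z_\-]{35}"),
    "anthropic-api-key": re.compile(r"\bsk-ant-[A-Za-z0-9_\-]{20,}"),
    "private-key-block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan(text: str) -> List[Tuple[int, str]]:
    """Return (line number, pattern name) for every line that matches a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


def main(paths: List[str]) -> int:
    """Scan each file; return 1 if anything matched so a Git hook can block the operation."""
    status = 0
    for path in paths:
        with open(path, encoding="utf-8", errors="replace") as fh:
            for lineno, name in scan(fh.read()):
                print(f"{path}:{lineno}: possible {name}", file=sys.stderr)
                status = 1
    return status
```

A hook entry point would call `sys.exit(main(paths))` on the staged files. Any finding then produces a non-zero exit, which Git treats as a refusal.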

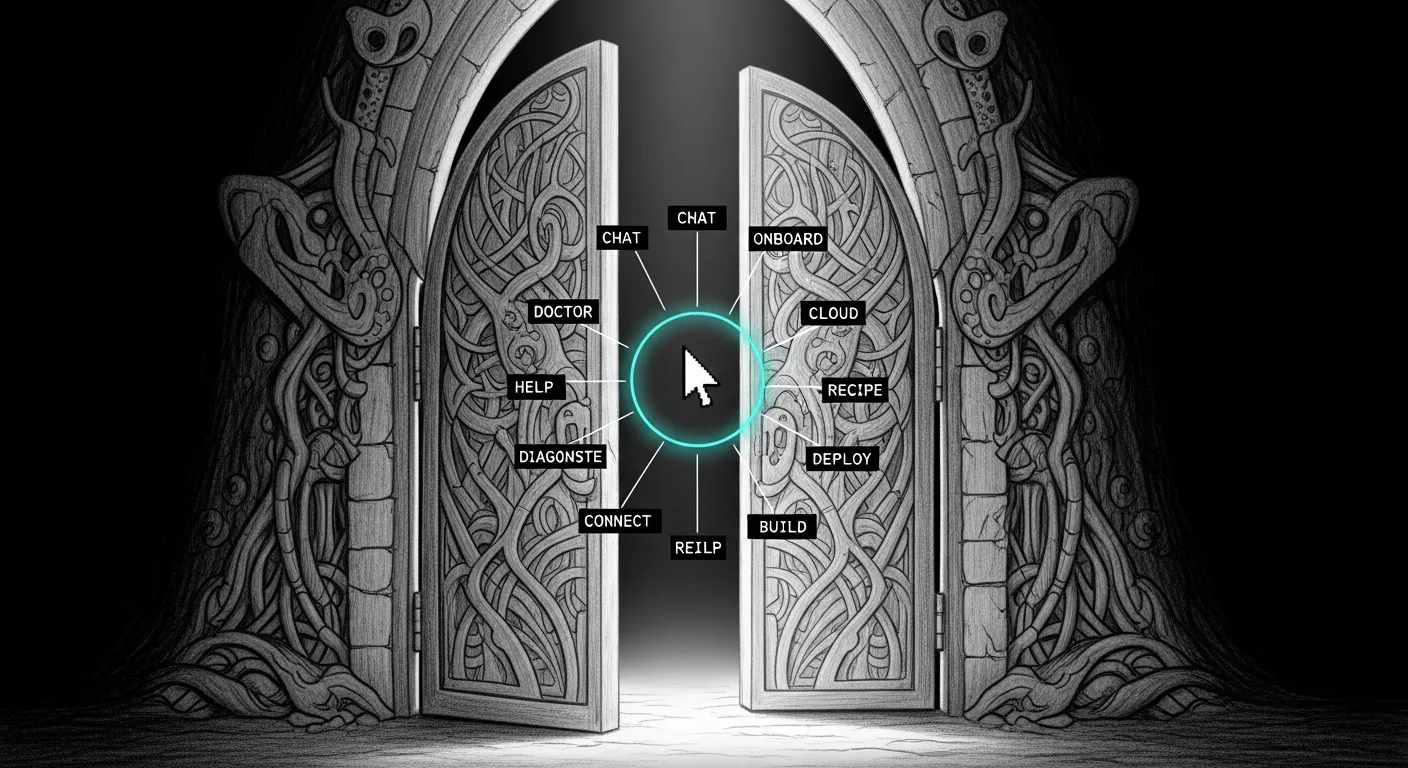

CLI surface

The 12 top-level commands shipped:

```
sanctum                                   # one-liner status
sanctum onboard --recipe family           # one-shot first-run wizard

sanctum chat "..."                        # router decides; default Claude (Max subscription)
sanctum code "..."                        # forced Claude
sanctum vision <file> "..."               # forced Gemini, multimodal

sanctum cloud setup [--backend r2|b2|gdrive|github]
sanctum backup recipes                    # list recipes (family / operator / code)
sanctum backup estimate <recipe>          # raw size + R2-free-tier compare
sanctum backup run [--recipe NAME] [--dry-run]
sanctum backup snapshots / verify / restore

sanctum doctor [--full|--json]
sanctum agent [list|status|start|stop|restart|logs]
sanctum proxy [status|restart|logs]
sanctum keychain [list|test|rotate]
sanctum config validate
```

Cloud backends

| Backend | Tier | Setup time | Free quota | Egress |
|---|---|---|---|---|
| r2 (default) | Tier 1 (restic-encrypted) | ~3 min | 10 GB / 1 M class A / 10 M class B | $0 always |
| b2 | Tier 1 (restic-encrypted) | ~3 min | none ($0.005/GB) | 3× stored free |
| gdrive | Tier 1 (restic-encrypted) | ~10 min | up to 30 TiB if AI Ultra | n/a |
| github | Tier 0 (public-safe configs) | ~1 min | unlimited private repos | n/a |

The R2 + GitHub combo is the productization sweet spot. GitHub Tier 0 holds dotfiles, brew lists, and sanctum LaunchAgent plists ($0). R2 Tier 1 holds documents and secrets ($0 for a typical household’s scope, under 10 GB after dedup). The Photos library stays in iCloud Photos: that is Apple’s job, and the wizard auto-detects and excludes the library bundle.
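The estimate step compares raw source size against R2's 10 GB free quota. Per the Honest pillar, it says so plainly when the raw number is over, without assuming dedup will bring it under. This minimal sketch assumes "10 GB" means decimal gigabytes and that the source paths are already expanded. The function names are hypothetical. The real stored size still comes only from a --dry-run.

```python
import os
from pathlib import Path
from typing import FrozenSet, Iterable

# Assumption: the R2 free tier's "10 GB" is read as decimal gigabytes.
R2_FREE_TIER_BYTES = 10 * 1000**3


def raw_size(sources: Iterable[Path], exclude_names: FrozenSet[str] = frozenset()) -> int:
    """Sum regular-file sizes under each (already-expanded) source, skipping excludes and symlinks."""
    total = 0
    for source in sources:
        for dirpath, dirnames, filenames in os.walk(source):
            # Pruning dirnames in place stops os.walk from descending into excluded directories.
            dirnames[:] = [d for d in dirnames if d not in exclude_names]
            for name in filenames:
                path = os.path.join(dirpath, name)
                if name in exclude_names or os.path.islink(path):
                    continue
                try:
                    total += os.path.getsize(path)
                except OSError:
                    pass  # file vanished or is unreadable; the estimate stays a lower bound
    return total


def free_tier_verdict(raw_bytes: int, limit: int = R2_FREE_TIER_BYTES) -> str:
    """Report the raw number honestly; never assume dedup will shrink it."""
    gb = raw_bytes / 1000**3
    if raw_bytes <= limit:
        return f"{gb:.1f} GB raw: fits the R2 free tier"
    return f"{gb:.1f} GB raw: over the R2 free tier before dedup; run --dry-run for the stored size"
```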

Backup recipes

A recipe is a named bundle of sources + excludes for an audience:

| Recipe | Audience | Sources | Typical post-dedup size |
|---|---|---|---|
| family | Typical household | Documents, Desktop, ssh, dotfiles | ~5 GB (fits R2 free tier) |
| operator | Sanctum host | .sanctum, .openclaw, .claude, dev projects | ~15 GB+ (T9 + GDrive) |
| code | Source only | ~/Projects | varies (pairs with GitHub Tier 0) |

User-defined recipes go in instance.yaml under cli.recipes; built-ins are overridable. When the iCloud Photos library bundle is present, its exclude pattern is added automatically.
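Overridable built-ins plus a conditional exclude can be sketched as a simple merge. This sketch makes several assumptions:

- cli.recipes parses into a mapping from recipe name to sources/excludes lists.
- The Photos library sits at the macOS default ~/Pictures/Photos Library.photoslibrary.
- The `Recipe` type and the built-in paths are hypothetical.
- A user recipe replaces a built-in of the same name wholesale. That override rule is one possible choice, not a confirmed one.

```python
from dataclasses import dataclass, replace
from pathlib import Path
from typing import Dict, Mapping, Sequence, Tuple


@dataclass(frozen=True)
class Recipe:
    name: str
    sources: Tuple[str, ...]
    excludes: Tuple[str, ...] = ()


# Hypothetical built-ins loosely echoing the recipes table; paths are illustrative.
BUILTIN_RECIPES: Dict[str, Recipe] = {
    "family": Recipe("family", ("~/Documents", "~/Desktop", "~/.ssh")),
    "operator": Recipe("operator", ("~/.sanctum", "~/.openclaw", "~/.claude", "~/Projects")),
    "code": Recipe("code", ("~/Projects",)),
}


def resolve_recipes(user_recipes: Mapping[str, Mapping[str, Sequence[str]]], home: Path) -> Dict[str, Recipe]:
    """Merge user recipes over the built-ins, then exclude the Photos library if it exists."""
    merged = dict(BUILTIN_RECIPES)
    for name, spec in user_recipes.items():
        # Assumed override rule: a same-named user recipe replaces the built-in entirely.
        merged[name] = Recipe(name, tuple(spec.get("sources", ())), tuple(spec.get("excludes", ())))
    photos = home / "Pictures" / "Photos Library.photoslibrary"
    if photos.exists():
        pattern = str(photos)
        merged = {
            name: r if pattern in r.excludes else replace(r, excludes=r.excludes + (pattern,))
            for name, r in merged.items()
        }
    return merged
```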

Shipped versions

- v0.1 (foundation): config schema, pure router, telemetry, Keychain wrapper, exit-code taxonomy, status + config validate

- v0.2 (providers): Claude (direct API), Gemini, MLX-local; chat; doctor

- v0.3: backup commands; code shortcut; B2 cloud-setup wizard

- v0.4: vision (multimodal Gemini); agent; proxy; keychain; GDrive wizard

- v0.5: R2 wizard with hand-rolled SigV4; R2 becomes the default backend

- v0.6: Claude via the Max-subscription proxy, now the default (sanctum chat -p claude is $0 marginal); R2 secondary-slot promotion

- v0.7: backup recipes (family / operator / code); estimate with R2-free-tier compare; iCloud Photos auto-detection

- v0.7.1: GitHub Tier 0 backend; sanctum onboard super-command; 16-pattern secret scanner
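sanctum's own SigV4 signer is not shown here. As a reference point, this sketch implements the key-derivation and string-to-sign steps of AWS Signature Version 4 as AWS documents them. It assumes R2's S3-compatible endpoint is signed with region `auto` and service `s3`, and the function names are hypothetical. Building the canonical request (method, path, sorted query, canonical headers, signed headers, payload hash) is left to the caller.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def signing_key(secret_access_key: str, date_stamp: str, region: str = "auto", service: str = "s3") -> bytes:
    """SigV4 key derivation: chained HMACs over date (YYYYMMDD), region, service, terminator."""
    k_date = _hmac_sha256(("AWS4" + secret_access_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def string_to_sign(amz_date: str, date_stamp: str, canonical_request: str,
                   region: str = "auto", service: str = "s3") -> str:
    """amz_date looks like 20260426T120000Z; the canonical request is hashed, not embedded."""
    scope = f"{date_stamp}/{region}/{service}/aws4_request"
    digest = hashlib.sha256(canonical_request.encode("utf-8")).hexdigest()
    return "\n".join(["AWS4-HMAC-SHA256", amz_date, scope, digest])


def signature(secret_access_key: str, amz_date: str, canonical_request: str,
              region: str = "auto", service: str = "s3") -> str:
    """Hex HMAC-SHA256 of the string to sign, keyed with the derived signing key."""
    date_stamp = amz_date[:8]
    key = signing_key(secret_access_key, date_stamp, region, service)
    sts = string_to_sign(amz_date, date_stamp, canonical_request, region, service)
    return hmac.new(key, sts.encode("utf-8"), hashlib.sha256).hexdigest()
```

The resulting hex signature goes into the Authorization header, alongside the credential scope and the list of signed headers.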

What’s next — v0.8 (May target)

These are packaging and polish, not architecture. The architecture is done.

| Item | Why | Effort |
|---|---|---|
| brew formula + tap | brew install sanctum-cli for the public install path. Tap published from a homebrew-sanctum repo. | ~half-day |
| Sigstore-signed releases | Self-update path verifies signatures before swapping the binary. Required before recommending public install. | ~half-day |
| Atomic-replace flow for cloud_backup | Wizard currently refuses if both slots are full. The replace flow walks the operator through retiring an old target + wiring a new one safely. | ~half-day |
| Storj / S3 / MinIO / Local-NAS in cloud setup | R2 covers most users; these are escape hatches for sovereignty-maximalists. Mostly endpoint changes on top of the existing S3-compat code path. | ~day |
| TUI dashboard (sanctum dashboard) | Live tail of telemetry + LaunchAgent state. Textual-based; the wow factor for power-user demos. | ~day |
| README + demo gif | Public-facing launch surface. The CLI is the interface with the geeks; the README is the front door. | ~half-day |
| Rust dispatcher port | Per language-maturity doctrine, promote the hot path once the surface is stable. v1.0 milestone. | multi-week, deferred |

Productization shape

For new users, the recommended flow is sanctum onboard --recipe family --yes:

- Photos-scope notice (sets correct expectations).

- Pre-flight estimate vs. the R2 free tier.

- R2 cloud setup wizard (three hex-string pastes; the bucket is created automatically).

- --dry-run for a transparent preview.

- Real backup.

- Restore canary against ~/.zshrc to prove the round trip.

- Done: sanctum doctor and sanctum backup snapshots for verification.

Total time: ~3-5 minutes. Total cost: $0 for any backup repo under 10 GB. Photos remain Apple’s responsibility via iCloud Photos.
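The onboarding flow is a fixed sequence of steps, and the wizards are described as resumable with atomic writes. One way to get both properties is sketched below, using hypothetical step names and a hypothetical JSON state file. Each completed step is recorded with a write-to-temp-then-`os.replace`. An interrupted run therefore resumes at the first unfinished step and never leaves a half-written file. The same helper would suit instance.yaml, though this is an assumption about structure rather than sanctum's actual code.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[], None]]  # (hypothetical step name, action)


def atomic_write_text(path: Path, text: str) -> None:
    """Write a sibling temp file, fsync it, then os.replace it over the target."""
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp = tempfile.mkstemp(dir=str(path.parent), prefix=path.name + ".", suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as fh:
            fh.write(text)
            fh.flush()
            os.fsync(fh.fileno())
        os.replace(tmp, path)  # atomic on POSIX when tmp and path share a filesystem
    except BaseException:
        if os.path.exists(tmp):
            os.unlink(tmp)
        raise


def run_resumable(steps: List[Step], state_path: Path) -> List[str]:
    """Run steps in order, skipping those already recorded as done; return names run now."""
    done = set(json.loads(state_path.read_text(encoding="utf-8"))["done"]) if state_path.exists() else set()
    ran: List[str] = []
    for name, action in steps:
        if name in done:
            continue
        action()  # if this raises, earlier steps stay recorded and the next run resumes here
        done.add(name)
        ran.append(name)
        atomic_write_text(state_path, json.dumps({"done": sorted(done)}))
    return ran
```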

See also

- ~/Projects/sanctum-cli/SPEC.md: canonical design doc

- language-maturity: Python → Rust transition pattern

- engineering-discipline: discovery-first config rule

- backup-restore: the backup architecture this CLI productizes

- Roadmap: Phase 2 + sanctum-cli v0.8